December 10, 2015

Mowat has long championed impact measurement and evidence-based policymaking for government.

Given that our own organizational aspiration is to have a meaningful impact on our province and country through our research, we felt strongly that we should also try to quantify and measure that impact. Because we advocate impact measurement and evaluation for government and other organizations, we wanted to put ourselves to the same test. The result was an impact report that examined Mowat’s first five years.

Here we would like to share our approach, methodology, and some key insights we have learned in the process of collecting, tracking, analyzing, and reporting our impact.


There are existing frameworks and methodologies for measuring think tank performance, and there are annual rankings of think tanks that try to capture impact, especially among global development think tanks addressing key policy issues like poverty. They often focus on public profile metrics like website analytics, social media following and engagement, report downloads, or news media engagement. Some add numbers of events held, talks given, or research references in scholarly or stakeholder literature.

These quantitative metrics are useful for assessing a think tank’s public presence and its ability to inform the broader public policy conversation, and we measured them for that reason.

Our approach

Influencing the public conversation

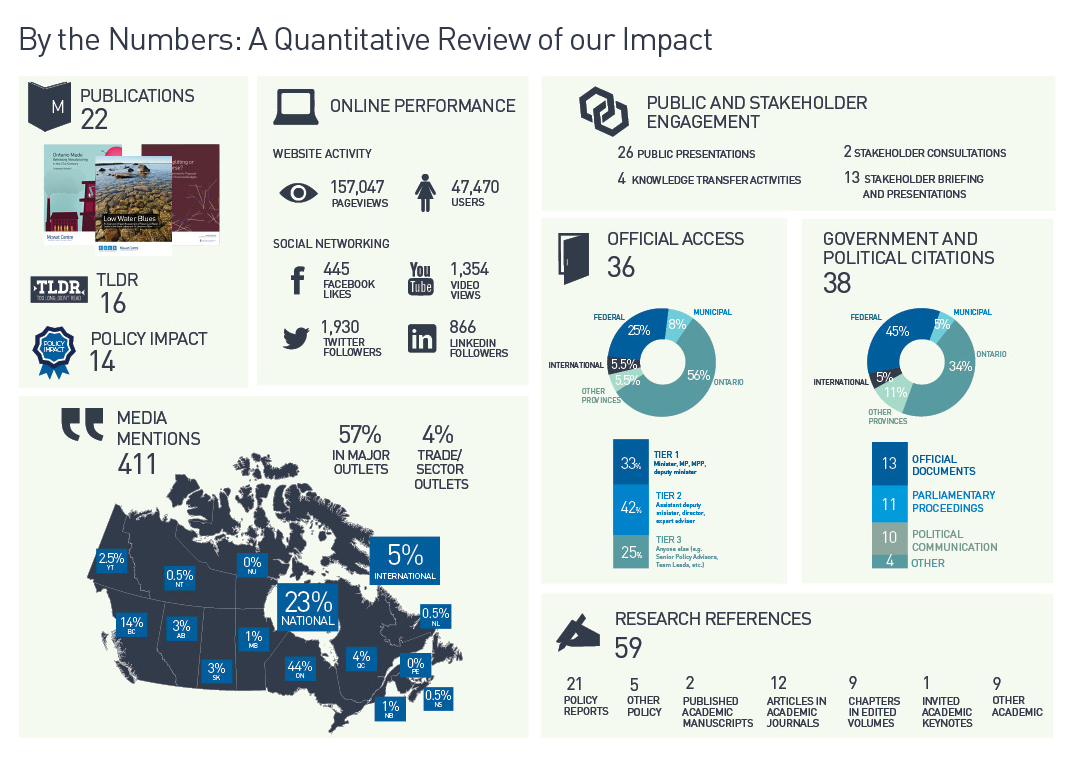

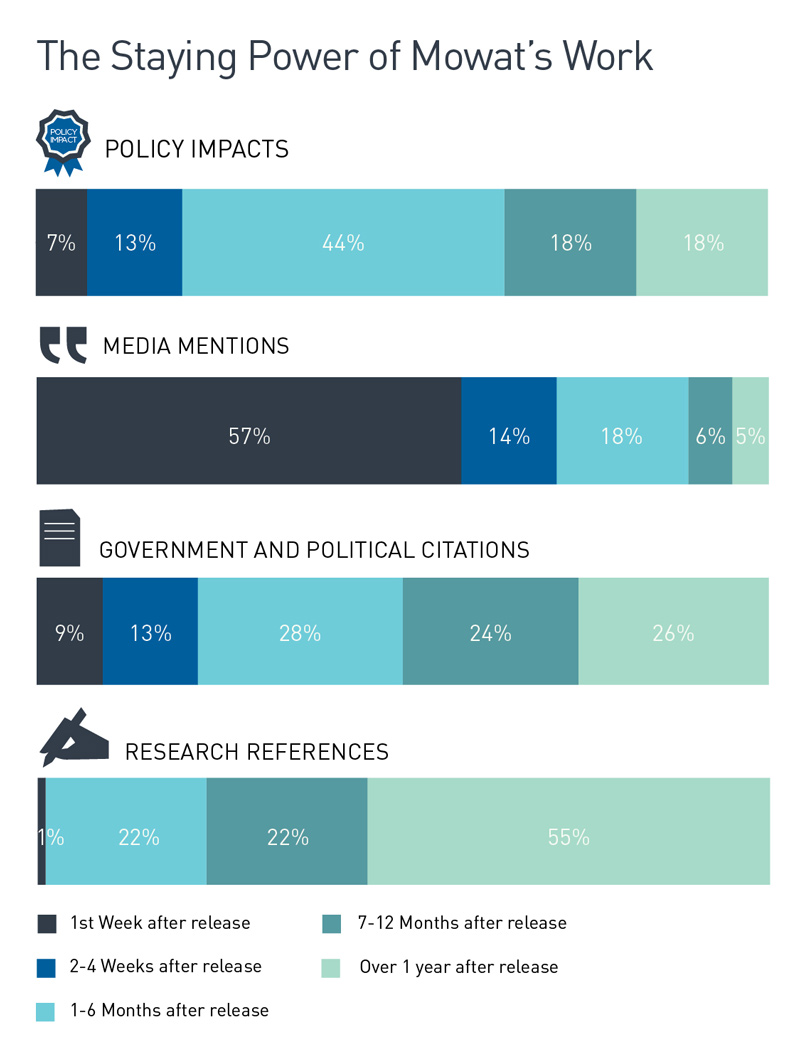

In assessing the extent of our influence on the broader public policy conversation, we asked ourselves what our public profile was, and to what extent our work was cited or relied upon by the media and by the policy research community.

To assess this we use many of the more traditional metrics used by other organizations:

Media mentions

We track our mentions in news media (print, broadcast, radio and online), including magazines and trade publications, using a paid media monitoring service. We analyze whether mentions are in major or non-major media outlets, where they are from (national or local media, which provinces/regions etc.), and the extent to which media are referring back to our research after the initial release.

Website activity and social media

We track our annual growth in website pageviews and users, Twitter followers, Facebook likes and downloads from our YouTube channel. As of 2014 we are also tracking LinkedIn followers.
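As a rough illustration, annual growth figures of this kind could be computed along the following lines. This is a minimal sketch, not Mowat's actual tooling: the function name `annual_growth` and the sample numbers are hypothetical and chosen only to show the arithmetic.

```python
def annual_growth(counts_by_year):
    """Return year-over-year percentage growth for each year after the first.

    counts_by_year maps a year to a yearly total (pageviews, followers, etc.).
    Growth is None when the previous year's total is zero, since a percentage
    change from zero is undefined.
    """
    years = sorted(counts_by_year)
    growth = {}
    for prev, curr in zip(years, years[1:]):
        base = counts_by_year[prev]
        if base == 0:
            growth[curr] = None
        else:
            change = counts_by_year[curr] - base
            growth[curr] = round(100.0 * change / base, 1)
    return growth


# Hypothetical figures, for illustration only (not Mowat's data).
pageviews = {2012: 100_000, 2013: 125_000, 2014: 150_000}
print(annual_growth(pageviews))  # {2013: 25.0, 2014: 20.0}
```

The same function would apply unchanged to any of the yearly counts mentioned above, as long as each metric is kept in its own year-to-total mapping.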

Research references

We track references in peer-reviewed academic sources (books, chapters in edited volumes, refereed journal articles, invited keynotes), other academic sources (academic conference papers, PhD dissertations, publicly available working/discussion papers), policy reports (from other think tanks, foundations, consultancies, advocacy organizations), and other policy work (factsheets, reviews, keynotes, etc.). We can break this data down by a variety of factors, for example the geographic origin of the reports or of their authors (based on affiliation).

But quantitative metrics capture our outputs, not our impact on outcomes. We wanted to go beyond this and do a richer evaluation of our impact. If the point of Mowat research is to influence government policy, the ultimate outcome in our case would be the implementation of policy we have advocated. Such an evaluation is very difficult. But we decided to try.

Influencing policymaking

In assessing our policymaking influence we asked ourselves: What government policies could we plausibly take credit for? How much influence do we have? How in demand is our work among policymakers?

To answer these questions we track:

- Citations in official government documents (budgets, statements, strategies, reports, interviews by government officials etc.)

- Parliamentary proceedings (floor and committee)

- Political communications (party releases and proposals and elected officials’ statements, interviews, op-eds and blog posts)

- Official access (instances where our researchers briefed officials on our research findings and recommendations)

These indicators show us the extent to which our work is getting on the radar of public officials and policymakers. We can even get a more nuanced picture of this by breaking down the data we collected across parameters such as levels of government and jurisdictions, or in the case of official access the level and seniority of the officials we briefed.
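To make the idea of breaking the data down across parameters concrete, here is a minimal sketch of how tracked items might be tallied by indicator type and level of government. Everything in it is an assumption for illustration: the `PolicyRecord` fields, the category labels, and the sample records are hypothetical and do not describe Mowat's actual database.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyRecord:
    """One tracked item; field names and labels are hypothetical."""
    indicator: str  # e.g. "citation", "proceeding", "communication", "access"
    level: str      # e.g. "federal", "provincial", "municipal"
    year: int


def breakdown(records, *fields):
    """Count records grouped by one or more attribute names."""
    return Counter(tuple(getattr(r, f) for f in fields) for r in records)


# Made-up sample records, for illustration only.
records = [
    PolicyRecord("citation", "provincial", 2013),
    PolicyRecord("citation", "federal", 2013),
    PolicyRecord("access", "provincial", 2014),
    PolicyRecord("citation", "provincial", 2014),
]

by_indicator = breakdown(records, "indicator")
by_indicator_and_level = breakdown(records, "indicator", "level")

print(by_indicator[("citation",)])                        # 3
print(by_indicator_and_level[("citation", "provincial")])  # 2
```

Keeping each tracked item as a flat record means a new breakdown, such as by year or by seniority of the officials briefed, only needs another field rather than a new tally.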

But we quickly realized that to identify, track and demonstrate our actual policy impacts we needed to combine this data with a qualitative approach. Because government policymaking is often a synthesis of multiple sources, and credit for policy is usually ascribed to the government of the day, drawing a direct line from our work to the final policy result can be challenging. And we have no interest in exaggerating our influence or taking credit for decisions when our impact was marginal or additive at best.

To identify our actual policy impacts – instances where we could demonstrate that our work likely had a clear influence on policymaking – we take three approaches:

- Look for direct attributions

We look for instances in which official government policy documents or statements from government officials directly cited our work.

These have included, among others, budget documents, economic updates and throne speeches, official strategies, press releases, and public statements or interviews by government officials reported in the media.

- Look for ‘one-step-removed’ citations

We look at documents that served as inputs into policymaking, or statements from officials as part of the policymaking process, that cite our work.

We assess whether the Mowat proposals/positions that were cited were incorporated into the final policy. We also assess whether the source citing us was a critical input into the policy or represented the government’s position on the matter.

For example, during legislative debate on the federal government’s electoral reform bills in 2010-2012, some of the government’s leading speakers in favour of the legislation drew on our work to justify the reform’s goals and principles, which were indeed reflected in the eventual legislation.

- Look for policies reflecting our work

In some cases, government policies reflect our proposals without direct or ‘one-step-removed’ links. Of course, the fact that a policy reflects our work does not in itself mean that our work actually influenced that policy. So whenever we find such cases, we look anywhere in our data or in the history of the research project for additional evidence that would support a plausible attribution of the policy to Mowat.

For example, when Ontario revamped its Partnership Grant Program, a major funding source for Ontario’s not-for-profit sector, some of the new or revised requirements reflected recommendations made by Mowat NFP. While the changes were not directly attributed to Mowat NFP, those recommendations were made in a report commissioned by the Ontario government to review and assess the program, so our claim to have influenced it was, we felt, reasonable.

Official access information is particularly helpful here. For example, our 2011 proposed principle for determining Quebec’s share of an expanded House of Commons was included in a federal reform proposal shortly after we briefed the minister in charge of the reform on it. Combined with the fact that the proposal was unique to Mowat, we felt confident we had influenced the policy once the reform was approved by the legislature.
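The three approaches above can be read as a rough decision order, sketched below. This is only an illustration under stated assumptions: in practice the assessment relies on judgment rather than boolean flags, and the `Evidence` fields, the `classify` function, and the way the conditions are combined are hypothetical simplifications, not a description of Mowat's actual procedure.

```python
from dataclasses import dataclass
from enum import Enum


class Attribution(Enum):
    DIRECT = "direct attribution"
    ONE_STEP_REMOVED = "one-step-removed citation"
    REFLECTED = "policy reflecting our work"
    NONE = "no plausible claim"


@dataclass
class Evidence:
    """Hypothetical summary of what was found for one policy."""
    cited_in_official_policy: bool = False        # budget, strategy, official statement
    cited_in_policy_input: bool = False           # e.g. legislative debate, input report
    proposal_in_final_policy: bool = False        # final policy reflects the proposal
    input_was_critical_or_official: bool = False  # input mattered or stated government's position
    supporting_items: int = 0                     # e.g. a briefing, a commissioned report


def classify(e: Evidence) -> Attribution:
    """Apply the three approaches in order, strongest evidence first (assumed ordering)."""
    if e.cited_in_official_policy:
        return Attribution.DIRECT
    if (e.cited_in_policy_input
            and e.proposal_in_final_policy
            and e.input_was_critical_or_official):
        return Attribution.ONE_STEP_REMOVED
    # A matching policy alone is not enough; require corroborating evidence.
    if e.proposal_in_final_policy and e.supporting_items > 0:
        return Attribution.REFLECTED
    return Attribution.NONE


# A policy that matches a proposal, backed by one corroborating item.
print(classify(Evidence(proposal_in_final_policy=True, supporting_items=1)).value)
# A policy that matches a proposal with no corroboration yields no claim.
print(classify(Evidence(proposal_in_final_policy=True)).value)
```

The last branch encodes the caution expressed above: a policy that merely resembles a proposal, with nothing else to corroborate the link, is not counted as an impact.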

What have we learned?

Assessing our impact is an ongoing learning experience and therefore also a work in progress. Here are some key lessons we have learned so far from our impact measurement work:

Be strategic

Be clear about what you are measuring

Part of Mowat’s mission is to work to “strengthen Ontario and Canada in a rapidly changing world.” But how do you measure that? This is really the ultimate outcome to which we want to contribute. But it’s practically impossible to trace our impact against it. Rather than get bogged down, we realized that it would be simpler, more practical, and more instructive to focus on influencing policymaking. It’s much easier to track measurable indicators of our influence on policymaking and public policy conversations.

Put your money where your mouth is

Rigorously tracking, analyzing and demonstrating your impact can’t just be done on the fly, without appropriate investment. Proper tracking and analysis take time. Some data, for example media mentions, are best obtained using subscription-based media monitoring services; setting up Google Alerts isn’t enough. Staff dedicated to this work need a good understanding of the organization’s different substantive streams/departments, as they will have to exercise judgment about what the data related to different files mean.

Ensure organization-wide buy-in

Some impact data – for example, data regarding meetings with officials and stakeholders or regarding public presentations – can only be provided by other members of your team. Make sure your policy staff in particular understand why this work is important and why their help is valuable – but also try not to rely solely on them.

Think tanks play an increasingly vital role in policymaking and policy conversations across Canada. Their best work delivers a high premium to the public and to policymakers through evidence-based research and innovative policy solutions. Assessing their policy influence and learning from this self-assessment is integral to think tanks’ ability to deliver on their policy-change mandates.

Applying outcome-based impact evaluation to our own work is new for us. Yet we believe we have taken some significant and instructive first steps. We share our methodology and lessons learned in the hope that others may find them useful. And we look forward to your comments and suggestions as we strive to improve our own impact measurement work. Feel free to send your thoughts to info@mowatcentre.ca.


Author

Reuven Shlozberg

Release Date

Dec 10, 2015